Deploying the ingress-nginx Load Balancer in k8s

- Author: 我的天呐

- Source: 51数据库

- 2021-08-08

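In Kubernetes, Service and Pod IP addresses are only reachable inside the cluster network and are invisible to applications outside the cluster. To let external applications reach services inside the cluster, Kubernetes currently offers the following options:

- NodePort

- LoadBalancer

- Ingress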

This section briefly introduces Ingress and records the deployment of the Ingress controller ingress-nginx-controller.

Component versions used below:

Cloud server: CentOS 7.6.1810, k8s 1.15.0, Docker 18.06.1-ce, ingress-nginx-controller 0.25.0

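Ingress

What is Ingress made of?

- Ingress controller: converts each newly added Ingress into nginx configuration and makes it take effect.

- Ingress service (the Ingress object): abstracts nginx configuration into an Ingress object, so adding a new service only requires writing a new Ingress YAML file.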
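To make the "one YAML file per service" idea concrete, here is a minimal sketch of such an Ingress object, written to a file from the shell. The host `demo.example.com`, the Service `demo-svc`, port 80 and the file name are placeholders chosen for illustration, not names from this article. `networking.k8s.io/v1beta1` is assumed as the API version because it is available in k8s 1.15; `extensions/v1beta1` should also be accepted on that version.

```shell
# Write a minimal Ingress manifest (all names below are illustrative placeholders).
cat > demo-ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1beta1
kind: Ingress
metadata:
  name: demo-ingress
  namespace: default
  annotations:
    # Ask the controller with ingress class "nginx" to handle this object.
    kubernetes.io/ingress.class: "nginx"
spec:
  rules:
    - host: demo.example.com          # domain name to match
      http:
        paths:
          - path: /
            backend:
              serviceName: demo-svc   # existing Service that receives the traffic
              servicePort: 80
EOF

echo "wrote demo-ingress.yaml; apply it with: kubectl apply -f demo-ingress.yaml"
```

Each further service would get its own small file of this shape, with only the host, path and backend changed.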

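How does Ingress work?

- The Ingress controller talks to the Kubernetes API and dynamically detects changes to the Ingress rules in the cluster.

- It then reads those rules, each of which states which domain name maps to which Service, and generates a piece of nginx configuration from them.

- That configuration is written into the nginx-ingress-controller pod. The pod runs an nginx server, and the controller writes the generated configuration to /etc/nginx/nginx.conf.

- Finally nginx is reloaded so that the configuration takes effect. This is how domain-based routing and dynamic configuration updates are achieved.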
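One way to observe this mechanism is to read the generated configuration out of the running controller pod. The sketch below assumes that kubectl is already configured for the cluster and that the controller runs in the `ingress-nginx` namespace with the label `app.kubernetes.io/name=ingress-nginx`, as in the manifest used in this article. `show_nginx_conf` is a hypothetical helper name.

```shell
# Print the nginx.conf that the controller generated inside its pod.
show_nginx_conf() {
  local ns="${1:-ingress-nginx}"
  local pod
  # Pick the first controller pod by label.
  pod="$(kubectl get pods -n "$ns" \
    -l app.kubernetes.io/name=ingress-nginx \
    -o jsonpath='{.items[0].metadata.name}')" || return 1
  kubectl exec -n "$ns" "$pod" -- cat /etc/nginx/nginx.conf
}

# Usage (needs a running cluster):
#   show_nginx_conf | grep server_name
```

After a new Ingress rule is created, its host name should appear in a `server_name` line of the output.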

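What problems does Ingress solve?

Dynamic service configuration

With the traditional approach, adding a new service usually means adding a reverse-proxy entry at the traffic entry point that points to the new service. With Ingress, you only need to configure the service; once it starts, it is registered with Ingress automatically, with no extra steps.

Fewer unnecessarily exposed ports

Anyone who has set up k8s knows that a common first step is to turn off the firewall, mainly because many k8s services are published as NodePort, which amounts to punching many holes in the host. That is neither secure nor elegant. Ingress avoids this problem: apart from the Ingress service itself, which may need to be exposed, no other service has to use NodePort.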

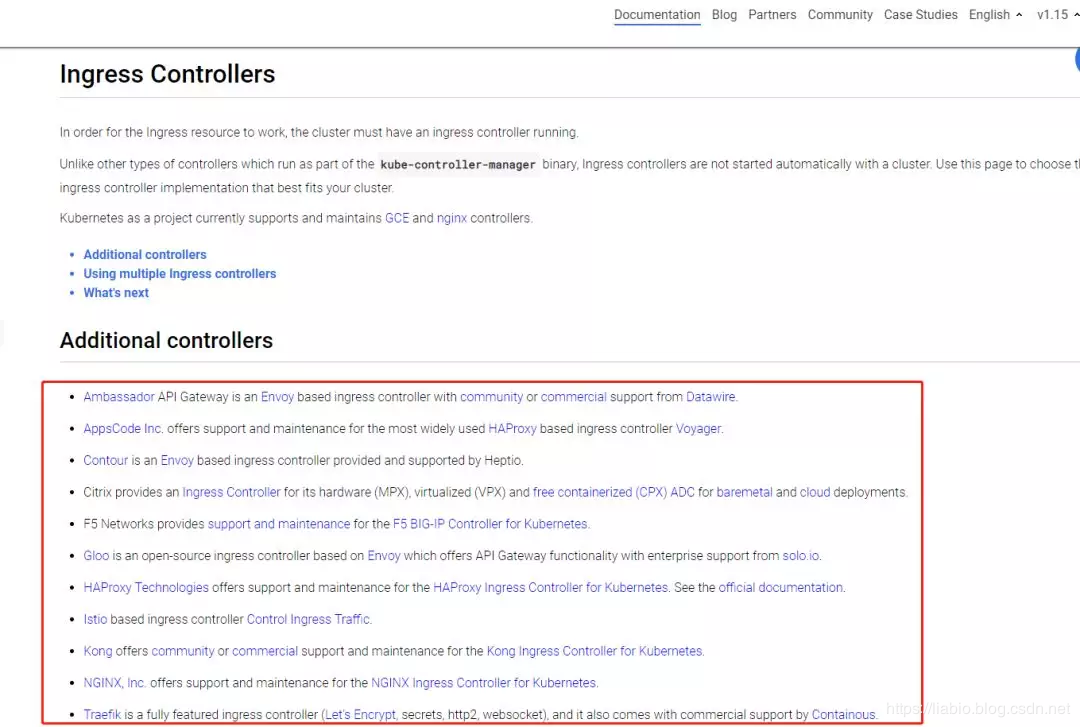

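How is Ingress currently implemented?

ingress-nginx-controller

The latest version of ingress-nginx-controller uses Lua so that a change to the upstreams no longer requires a reload. This greatly reduces the nginx reloads that used to be triggered in production by IP changes from service restarts and upgrades.

The deployment of ingress-nginx-controller is recorded below.

The YAML is as follows:

    kubectl apply -f {the file below}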

    apiVersion: v1
    kind: Namespace
    metadata:
      name: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    ---

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: nginx-configuration
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    ---

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: tcp-services
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    ---

    kind: ConfigMap
    apiVersion: v1
    metadata:
      name: udp-services
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    ---

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: nginx-ingress-serviceaccount
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    ---

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRole
    metadata:
      name: nginx-ingress-clusterrole
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    rules:
      - apiGroups:
          - ""
        resources:
          - configmaps
          - endpoints
          - nodes
          - pods
          - secrets
        verbs:
          - list
          - watch
      - apiGroups:
          - ""
        resources:
          - nodes
        verbs:
          - get
      - apiGroups:
          - ""
        resources:
          - services
        verbs:
          - get
          - list
          - watch
      - apiGroups:
          - ""
        resources:
          - events
        verbs:
          - create
          - patch
      - apiGroups:
          - "extensions"
          - "networking.k8s.io"
        resources:
          - ingresses
        verbs:
          - get
          - list
          - watch
      - apiGroups:
          - "extensions"
          - "networking.k8s.io"
        resources:
          - ingresses/status
        verbs:
          - update
    ---

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: Role
    metadata:
      name: nginx-ingress-role
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    rules:
      - apiGroups:
          - ""
        resources:
          - configmaps
          - pods
          - secrets
          - namespaces
        verbs:
          - get
      - apiGroups:
          - ""
        resources:
          - configmaps
        resourceNames:
          # Defaults to "<election-id>-<ingress-class>"
          # Here: "<ingress-controller-leader>-<nginx>"
          # This has to be adapted if you change either parameter
          # when launching the nginx-ingress-controller.
          - "ingress-controller-leader-nginx"
        verbs:
          - get
          - update
      - apiGroups:
          - ""
        resources:
          - configmaps
        verbs:
          - create
      - apiGroups:
          - ""
        resources:
          - endpoints
        verbs:
          - get
    ---

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: RoleBinding
    metadata:
      name: nginx-ingress-role-nisa-binding
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: nginx-ingress-role
    subjects:
      - kind: ServiceAccount
        name: nginx-ingress-serviceaccount
        namespace: ingress-nginx
    ---

    apiVersion: rbac.authorization.k8s.io/v1beta1
    kind: ClusterRoleBinding
    metadata:
      name: nginx-ingress-clusterrole-nisa-binding
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: nginx-ingress-clusterrole
    subjects:
      - kind: ServiceAccount
        name: nginx-ingress-serviceaccount
        namespace: ingress-nginx
    ---

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx-ingress-controller
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    spec:
      replicas: 1
      selector:
        matchLabels:
          app.kubernetes.io/name: ingress-nginx
          app.kubernetes.io/part-of: ingress-nginx
      template:
        metadata:
          labels:
            app.kubernetes.io/name: ingress-nginx
            app.kubernetes.io/part-of: ingress-nginx
          annotations:
            prometheus.io/port: "10254"
            prometheus.io/scrape: "true"
        spec:
          serviceAccountName: nginx-ingress-serviceaccount
          containers:
            - name: nginx-ingress-controller
              image: quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.25.0
              args:
                - /nginx-ingress-controller
                - --configmap=$(POD_NAMESPACE)/nginx-configuration
                - --tcp-services-configmap=$(POD_NAMESPACE)/tcp-services
                - --udp-services-configmap=$(POD_NAMESPACE)/udp-services
                - --publish-service=$(POD_NAMESPACE)/ingress-nginx
                - --annotations-prefix=nginx.ingress.kubernetes.io
              securityContext:
                allowPrivilegeEscalation: true
                capabilities:
                  drop:
                    - ALL
                  add:
                    - NET_BIND_SERVICE
                # www-data -> 33
                runAsUser: 33
              env:
                - name: POD_NAME
                  valueFrom:
                    fieldRef:
                      fieldPath: metadata.name
                - name: POD_NAMESPACE
                  valueFrom:
                    fieldRef:
                      fieldPath: metadata.namespace
              ports:
                - name: http
                  containerPort: 80
                - name: https
                  containerPort: 443
              livenessProbe:
                failureThreshold: 3
                httpGet:
                  path: /healthz
                  port: 10254
                  scheme: HTTP
                initialDelaySeconds: 10
                periodSeconds: 10
                successThreshold: 1
                timeoutSeconds: 10
              readinessProbe:
                failureThreshold: 3
                httpGet:
                  path: /healthz
                  port: 10254
                  scheme: HTTP
                periodSeconds: 10
                successThreshold: 1
                timeoutSeconds: 10
    ---
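Once the manifest has been applied, a quick check along the following lines can show whether the controller actually came up. This is only a sketch: it assumes kubectl is configured for the cluster, `check_ingress_controller` is a hypothetical helper name, and the 120-second timeout is an arbitrary choice.

```shell
# Wait for the controller Deployment to roll out, then list its pods.
check_ingress_controller() {
  local ns="${1:-ingress-nginx}"
  # Returns non-zero if the rollout does not finish within the timeout.
  kubectl -n "$ns" rollout status deployment/nginx-ingress-controller --timeout=120s
  # Listed in either case, so that a Pending or failing pod is visible.
  kubectl -n "$ns" get pods -o wide
}

# Usage (needs a running cluster):
#   check_ingress_controller
```

If the rollout times out, the pod list usually points at the cause, for example an image that cannot be pulled or a pod that cannot be scheduled.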

From inside mainland China, the following image may fail to pull:

    quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.25.0

A domestic Alibaba Cloud mirror can be used instead:

    docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/nginx-ingress-controller:0.25.0
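Pulling from the mirror is not enough on its own, because the Deployment still refers to the quay.io image name. Two possible ways to bridge that gap are sketched below; the function names are hypothetical, and the manifest file name in the usage comment is a placeholder for whatever file holds the Deployment.

```shell
MIRROR_IMAGE="registry.cn-hangzhou.aliyuncs.com/google_containers/nginx-ingress-controller:0.25.0"
ORIGINAL_IMAGE="quay.io/kubernetes-ingress-controller/nginx-ingress-controller:0.25.0"

# Option 1: pull from the mirror and retag locally under the name the manifest expects.
# This has to be run on every node that may run the controller pod.
pull_and_retag() {
  docker pull "$MIRROR_IMAGE"
  docker tag "$MIRROR_IMAGE" "$ORIGINAL_IMAGE"
}

# Option 2: rewrite the image reference in the manifest file given as $1.
use_mirror_in_manifest() {
  sed "s#${ORIGINAL_IMAGE}#${MIRROR_IMAGE}#g" "$1" > "$1.tmp" && mv "$1.tmp" "$1"
}

# Usage:
#   pull_and_retag
#   use_mirror_in_manifest mandatory.yaml   # placeholder file name
```

Option 1 relies on the kubelet using the local image, which is the default pull behaviour for a tag other than `latest`. Option 2 keeps every node free of manual steps, provided the nodes can reach the mirror.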

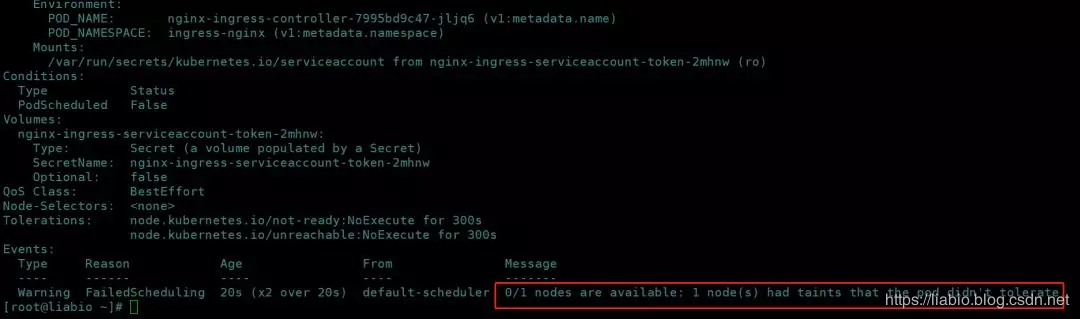

After installation, the pod in the ingress-nginx namespace stays in Pending, and describing the pod shows the following warning:

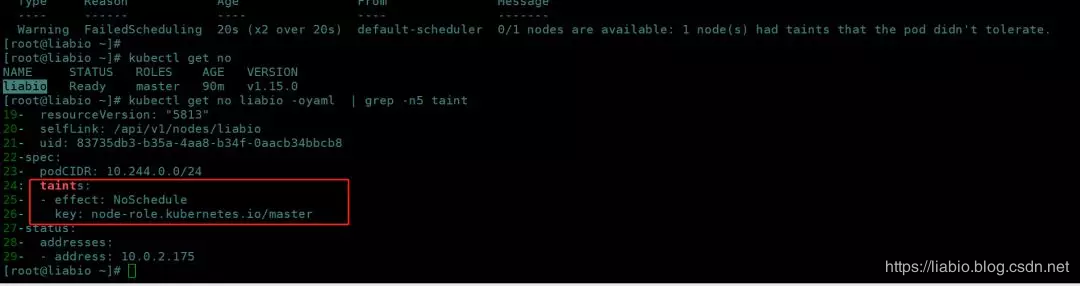

Inspecting the master node shows that it carries a taint by default, so pods are normally not scheduled onto it:

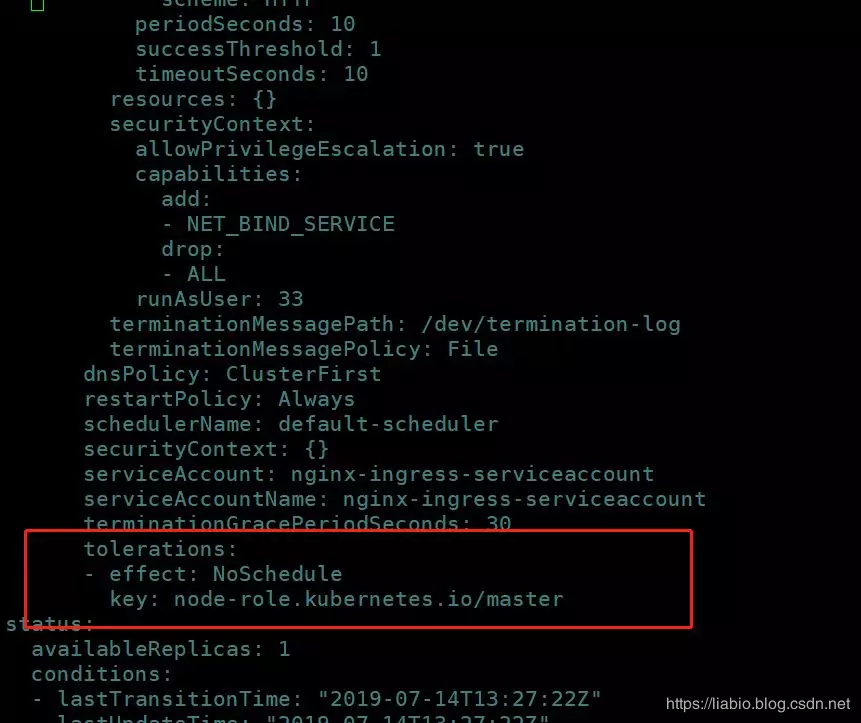
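The taints can also be listed from the command line. A small sketch, assuming kubectl is configured for the cluster; `list_node_taints` is a hypothetical helper name.

```shell
# Show the name and the taints of every node in the cluster.
list_node_taints() {
  kubectl describe nodes | grep -E '^(Name|Taints):'
}

# Usage (needs a running cluster):
#   list_node_taints
```

On a kubeadm-style master of this k8s version, the `Taints:` line would be expected to show `node-role.kubernetes.io/master:NoSchedule`.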

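If the k8s cluster has only one node, you can add a toleration for this taint under the pod's spec (spec.template.spec in the Deployment), that is:

    spec:
      tolerations:
        - effect: NoSchedule
          key: node-role.kubernetes.io/master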
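Instead of editing the Deployment manifest by hand, the same toleration could be added with a patch. The sketch below writes a strategic-merge patch file and shows the kubectl command as a comment; the file name `toleration-patch.yaml` is a placeholder.

```shell
# Patch that places the toleration under spec.template.spec of the Deployment.
cat > toleration-patch.yaml <<'EOF'
spec:
  template:
    spec:
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
EOF

# Apply it with (needs a running cluster):
#   kubectl -n ingress-nginx patch deployment nginx-ingress-controller \
#     --patch "$(cat toleration-patch.yaml)"
```

Patching the Deployment changes its pod template, so a new pod is created and the scheduler gets another chance to place it.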

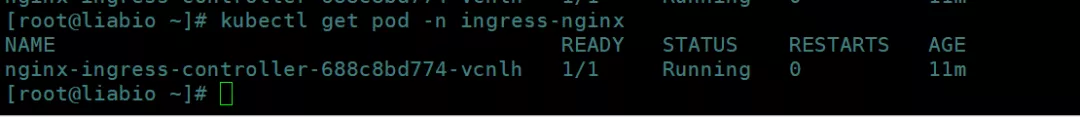

The ingress-nginx pod is now scheduled onto the master node and its status changes to Running.

The log shows the following warning:

    W0714 13:31:04.883127 6 queue.go:130] requeuing &ObjectMeta{Name:sync status,GenerateName:,Namespace:,SelfLink:,UID:,ResourceVersion:,Generation:0,CreationTimestamp:0001-01-01 00:00:00 +0000 UTC,DeletionTimestamp:<nil>,DeletionGracePeriodSeconds:nil,Labels:map[string]string{},Annotations:map[string]string{},OwnerReferences:[],Finalizers:[],ClusterName:,Initializers:nil,ManagedFields:[],}, err services "ingress-nginx" not found

A Service named ingress-nginx needs to be created:

    kubectl apply -f {the file below}

    kind: Service
    apiVersion: v1
    metadata:
      name: ingress-nginx
      namespace: ingress-nginx
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
    spec:
      externalTrafficPolicy: Local
      type: LoadBalancer
      selector:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
      ports:
        - name: http
          port: 80
          targetPort: http
        - name: https
          port: 443
          targetPort: https
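With the Service in place, a request can be sent through the controller as a smoke test. The following is a rough sketch under several assumptions: the cluster has no cloud load balancer integration, so the Service is reached through the node port that a `LoadBalancer` Service is also given; an Ingress rule for the chosen host already exists; and the helper names are hypothetical. Because of `externalTrafficPolicy: Local`, the node IP should be that of the node running the controller pod.

```shell
# Print the node port allocated for the Service port named "http".
ingress_http_nodeport() {
  kubectl -n ingress-nginx get service ingress-nginx \
    -o jsonpath='{.spec.ports[?(@.name=="http")].nodePort}'
}

# Send one request through the controller and print the HTTP status code.
# $1 = IP of the node running the controller pod, $2 = host name from an Ingress rule
probe_ingress() {
  local node_ip="$1" host="$2" port
  port="$(ingress_http_nodeport)" || return 1
  curl -sS -o /dev/null -w '%{http_code}\n' -H "Host: ${host}" "http://${node_ip}:${port}/"
}

# Usage (needs a running cluster; both arguments are placeholders):
#   probe_ingress 192.0.2.10 demo.example.com
```

A 404 from the controller's default backend usually means the request reached nginx but no Ingress rule matched the given host.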

